TIMCAN Project, Functional Testing Plan

Hanna Alatalo

Jarkko Kuivanen

Elisa Nauha

Jere Ojala

Kimmo Urtamo

Version 1.0.0

Public

5.6.2019

University of Jyväskylä

Faculty of Information Technology

Info

Title of the document: TIMCAN Project, Functional Testing Plan

File:

https://tim.jyu.fi/view/kurssit/tie/proj/2019/timcan/dokumentit/testaus/functional-testing-plan

Abstract: The functional testing plan of the TIMCAN project describes which functionalities are to be tested, how the testing is executed and how the testing shall be reported.

Keywords: Test plan, functional testing, TIM, testing, The Centre for Applied Language Studies, adaptive feedback, dynamic feedback, virtual learning environment, plugin.

Change History

| Version | Date | Changes | Author |

|---|---|---|---|

| 0.0.1 | 11.3.2019 | Created the template. | Jere Ojala |

| 0.0.2 | 21.3.2019 | Translated into English. | Jere Ojala |

| 0.0.3 | 25.3.2019 | Translated into English. | Jere Ojala |

| 0.0.4 | 1.4.2019 | Fixed the reference footnote links. | Jere Ojala |

| 0.0.5 | 4.4.2019 | Added test cases. | Jere Ojala |

| 0.0.6 | 5.4.2019 | Added test cases for building test and answering the built test. | Elisa Nauha |

| 0.0.7 | 5.4.2019 | Added test case for report exporting and fixed typos. | Jere Ojala |

| 0.0.8 | 9.4.2019 | Added test cases for task editing errors and cleaned up prior cases. | Elisa Nauha |

| 0.0.9 | 10.4.2019 | Added test cases for building tasks with errors. | Elisa Nauha |

| 0.0.10 | 11.4.2019 | Added test cases for exporting. | Jere Ojala |

| 0.0.11 | 11.4.2019 | Added test cases for tasks with errors and added test cases for drag-and-drop task. | Elisa Nauha |

| 0.0.12 | 12.4.2019 | Added test cases for exporting. | Jere Ojala |

| 0.0.13 | 15.4.2019 | Added test cases for exporting. | Jere Ojala |

| 0.0.14 | 16.4.2019 | Added objectives, execution and test run. | Jere Ojala |

| 0.0.15 | 17.4.2019 | Edited task templates. | Elisa Nauha |

| 0.0.16 | 18.4.2019 | Corrected typos and other errors. | Jere Ojala |

| 0.0.17 | 23.4.2019 | Added test cases for new features. | Jere Ojala |

| 0.0.18 | 23.4.2019 | Edited task templates to match current. | Elisa Nauha |

| 0.1.0 | 24.4.2019 | Made preliminary fixes. | Jere Ojala |

| 0.1.1 | 25.4.2019 | Created templates for similar test cases. | Jere Ojala |

| 0.1.2 | 29.4.2019 | Made minor tweaks. | Jere Ojala |

| 0.1.3 | 6.5.2019 | Added information from obsolete test plan. | Jere Ojala |

| 0.1.4 | 6.5.2019 | Replaced the images in the test cases for building and answering test. | Elisa Nauha |

| 0.1.5 | 7.5.2019 | Fixed the test cases for errors. | Elisa Nauha |

| 0.1.6 | 9.5.2019 | Capitalized headers. | Jere Ojala |

| 0.2.0 | 12.5.2019 | Published for evaluation. | Jere Ojala |

| 0.2.1 | 15.5.2019 | Made fixes. | Jere Ojala |

| 0.2.2 | 22.5.2019 | Made fixes. | Jere Ojala |

| 0.3.0 | 22.5.2019 | Published for evaluation. | Jere Ojala |

| 0.3.1 | 28.5.2019 | Made fixes. | Jere Ojala |

| 0.4.0 | 28.5.2019 | Published for the project organization. | Jere Ojala |

| 1.0.0 | 5.6.2019 | The document was approved by the customer. | Kimmo Urtamo |

1. Target Application

The functionalities developed by the TIMCAN project will replace an existing application called ICAnDoiT. ICAnDoiT is used by The Centre for Applied Language Studies for studying adaptive feedback. Adaptive feedback is feedback that becomes gradually more detailed with every wrong response. For the virtual learning environment TIM, the project team developed a dropdown menu plugin, a drag-and-drop plugin, an adaptive feedback plugin and functionality for exporting test results into a CSV file.

2. Target Features

This chapter describes the features developed in the TIMCAN project that are to be tested and the features that are excluded from testing.

2.1 Target Features

The target features are

- building a test,

- answering a test,

- exporting a report of the test,

- building a task with errors,

- a dropdown control,

- a radio selection and

- a drag-and-drop control.

2.2 Features Omitted from Testing

The features left outside of testing are

- test reporting from specified periods and

- validity filtering of the test reporting.

Date- and time-related features were omitted because testing them would require too much time between testing sessions: dates and times committed to the database cannot be changed manually.

Filtering based on validity was omitted from testing because invalidating answers may not be possible within the Feedback plugin.

3. Objectives of the Test Session and Methods of Execution

This chapter describes the objectives and the execution of the test session.

3.1 Objectives

In functional testing, the most essential features are tested with different inputs. The objective is to ensure that the system behaves consistently and without errors in use.

3.2 Execution

Functional testing shall be executed manually as black-box testing. The test data is chosen using equivalence classes.
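As an illustration of how equivalence classes narrow down the test data, the following sketch partitions the possible answers to one dropdown item into three classes (correct, wrong and no answer) and picks one representative input from each. The function names and the choice of classes are assumptions made for this example and are not part of the TIM plugins; the option texts are taken from Appendix 1.

```python
from typing import Dict, Optional, Sequence


def classify_answer(answer: Optional[str], correct: str, options: Sequence[str]) -> str:
    """Return the equivalence class of one answer to a dropdown item."""
    if not answer:
        return "empty"  # nothing was chosen
    if answer not in options:
        raise ValueError(f"{answer!r} is not one of the dropdown options")
    return "correct" if answer == correct else "wrong"


def pick_representatives(correct: str, options: Sequence[str]) -> Dict[str, Optional[str]]:
    """Pick one test input per equivalence class (the first option found in each)."""
    representatives: Dict[str, Optional[str]] = {"empty": None}
    for option in options:
        representatives.setdefault(classify_answer(option, correct, options), option)
    return representatives


options = ["is cooking", "do cooking", "are cooking"]
representatives = pick_representatives("is cooking", options)
print(representatives)
```

Testing one input per class keeps a manual test run short while still covering each kind of behaviour the tester expects to differ.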

4. Test Run

Only the person executing the test shall participate in a test run. The tester should have a basic understanding of operating the Windows operating system and the TIM system.

4.1 Testing Environment

For testing purposes, the tester must have access to a computer with a web browser and an Internet connection.

4.2 Testing Report

The testing report should include the identification and a summary of the test session.

The following information about the testing environment should be included in the test session report:

- the software and version,

- the operating system and version,

- the browser and version,

- the working computer/device and version, and

- the test server of the testing environment.

For identification of the testing session, the following information should be recorded:

- the name and version of the test plan,

- the test session participants,

- the test execution date and

- the tested test cases.

The summary should include

- the number of executed test cases,

- the number of test cases not executed,

- the number of each conclusion separately specified,

- exceptions from the test plan and their justifications and

- recommended procedures after the test session.

The conclusion of the test case must be one of the following:

- OK is the result when the test case has succeeded and the end result matches the expected result.

- Error is the result when the end result differs from the expected result or something generally harmful is noticed during the test run.

- Note is the result when the tester has noticed an abnormality or something suspicious in the functionality.

In the case of Error and Note, the observations should be reported with relevant details, such as the procedures, inputs, abnormalities and possible error messages that led to the result.

The report of the test cases should be compiled in the following table format.

| Case ID | Task and input | Conclusion | Details |

|---|---|---|---|

| 2.2 | Correct streak | Note | OK button doesn't work, only the link works. |
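The counts required in the summary can be derived mechanically from the per-case conclusions. The following is a minimal sketch of that tally, assuming the results are kept as a mapping from case ID to conclusion; the function name and the sample results are hypothetical (only the Note for case 2.2 comes from the example row above).

```python
from collections import Counter
from typing import Dict, Sequence

CONCLUSIONS = ("OK", "Error", "Note")


def summarize(planned_case_ids: Sequence[str], results: Dict[str, str]) -> dict:
    """Count executed and unexecuted cases and each conclusion separately."""
    unknown = set(results.values()) - set(CONCLUSIONS)
    if unknown:
        raise ValueError(f"unknown conclusions: {sorted(unknown)}")
    counts = Counter(results.values())
    return {
        "executed": len(results),
        "not_executed": sum(1 for case_id in planned_case_ids if case_id not in results),
        "by_conclusion": {name: counts[name] for name in CONCLUSIONS},
    }


# Hypothetical session: four planned cases, three of them executed.
summary = summarize(
    ["2.1", "2.2", "2.3", "2.4"],
    {"2.1": "OK", "2.2": "Note", "2.3": "OK"},
)
print(summary)
```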

4.3 Identification of the Test Cases

The numbering of the test cases should be in the form [Case ID].[Subcase ID].

Case IDs by task titles are listed in the following table.

| Task | Case ID |

|---|---|

| Teacher building a test | 1 |

| Student answering a test | 2 |

| Report exporting of test | 3 |

| Building task with errors | 4 |

5. Test Cases for Building a Test

The test cases 1.1-1.4 test the successful building of a test with tasks.

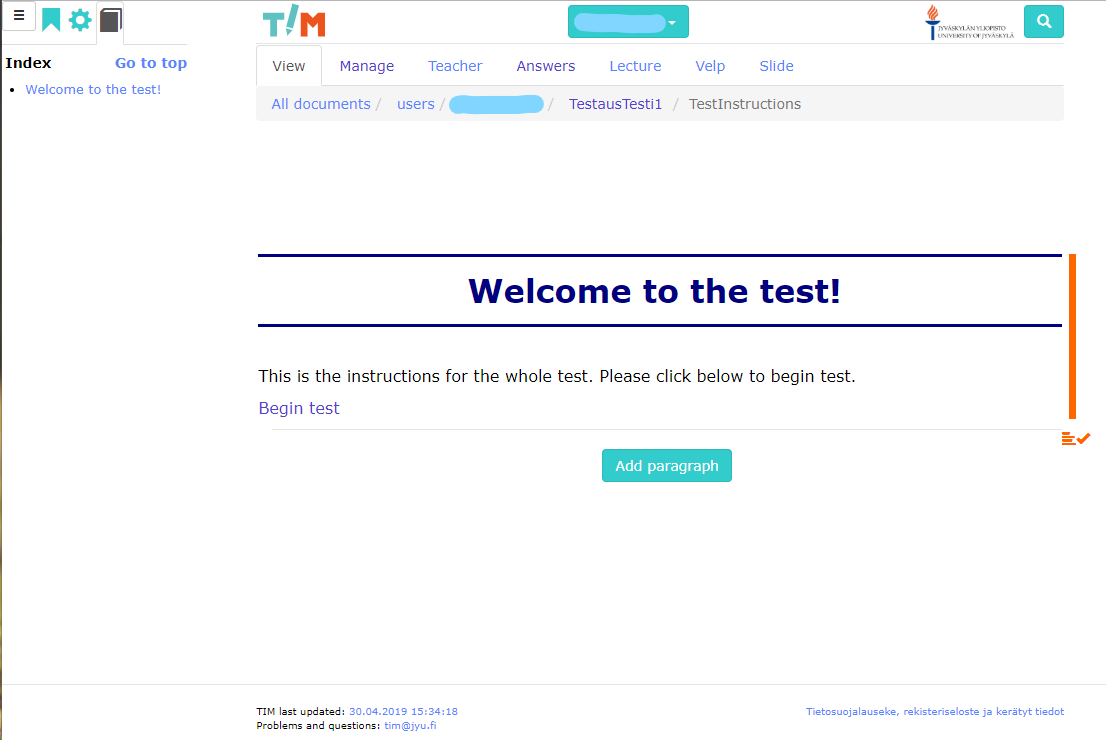

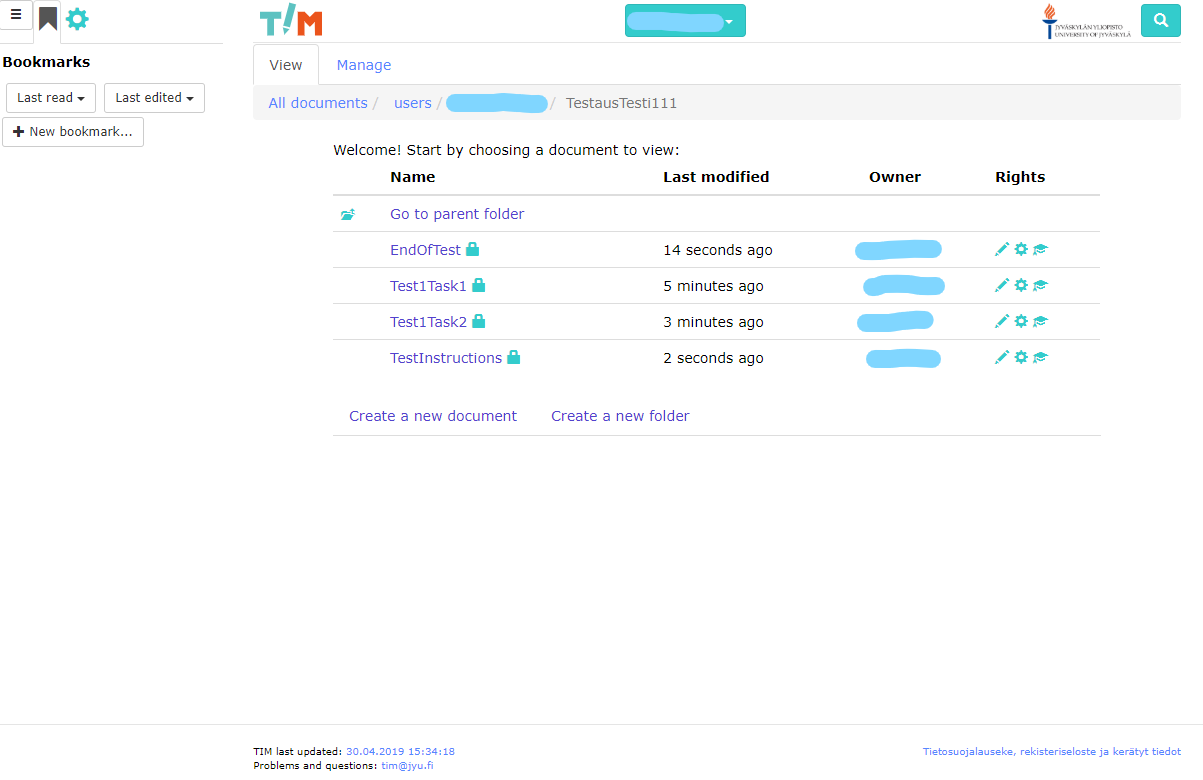

5.1 Building a Test, Instruction Page

Test case: 1.1

Initial state: The user is signed in to the TIM development machine.

Steps:

- Create a new folder called `TestausTesti1` in your documents.

- In Manage mode, set Default rights for new documents in the folder to View for Logged-in Users.

- In the folder, create a document called `TestInstructions`.

- Click Add Paragraph, copy in the instructions markup (see Test data) and click Save.

Test data:

# Welcome to the test!

This is the instructions for the whole test. Please click below to begin test.

[Begin test](test1task1)

Expected result:

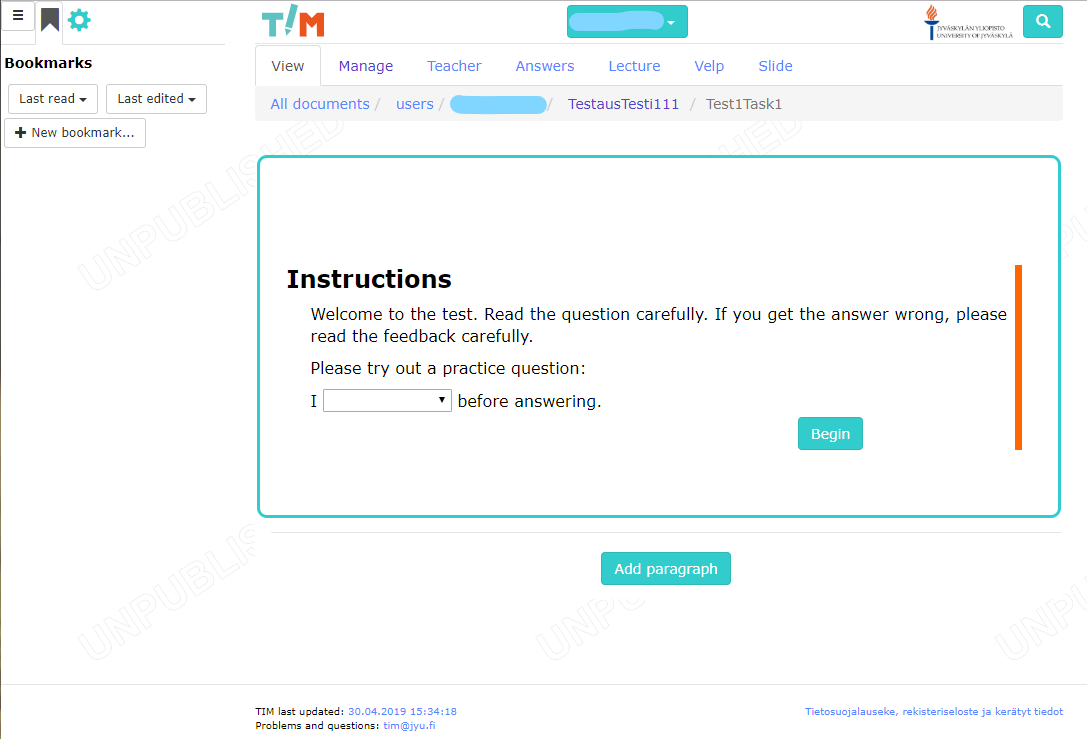

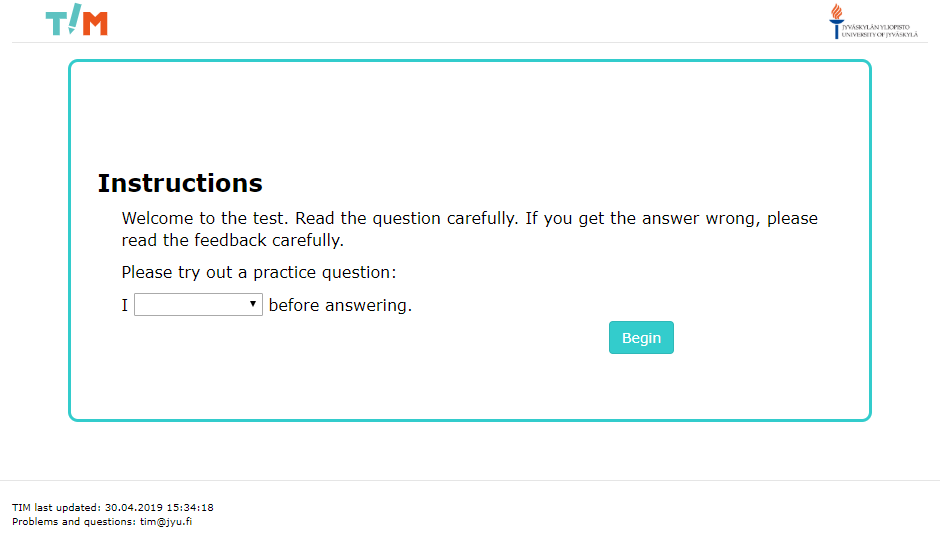

5.2 Building a Test, Task 1 with Dropdown Questions

Test case: 1.2

Initial state: The user is signed in to the TIM development machine and has completed the test case 1.1.

Steps:

- Go to the folder `TestausTesti1` in your documents.

- In the folder, create a document called `test1task1`.

- Go to the Manage mode, copy the task markup from Appendix 1 into the Edit the full document box and click Save.

- Go to the View mode.

- In the View mode, left-click on the left side of the feedback paragraph and edit the paragraph.

- Find the line `nextTask:` and write in `"[Task 2](test1task2)"`.

Test data: See Appendix 1

Expected result: The task is ready and looks like so:

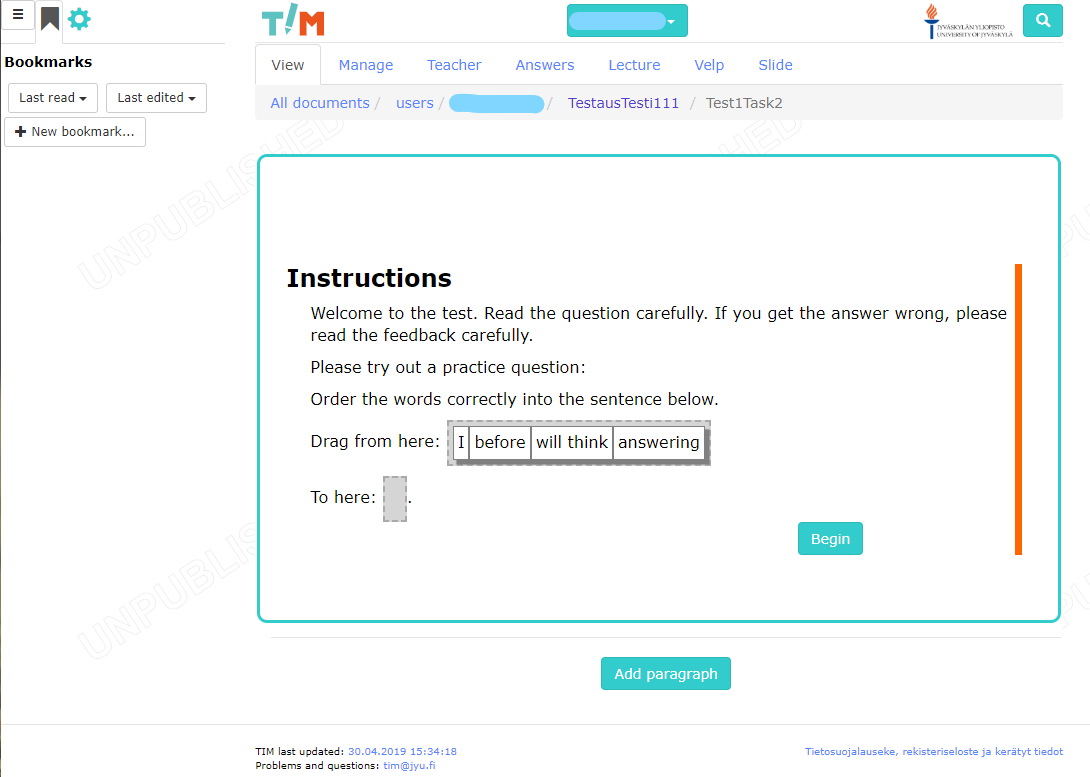

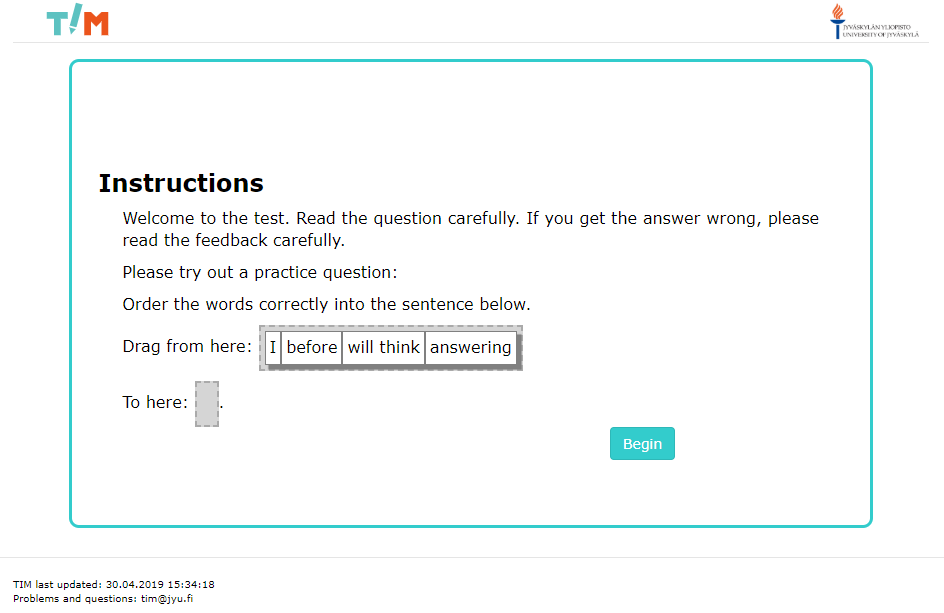

5.3 Building a Test, Task 2 with Drag-and-Drop Questions

Test case: 1.3

Initial state: The user is signed in to the TIM development machine and has executed the test case 1.2.

Steps:

- Go to the folder `TestausTesti1` in your documents.

- In the folder, create a document called `Test1Task2`.

- Go to the Manage mode, copy the task markup from Appendix 2 into the Edit the full document box and click Save.

- Go to the View mode.

- In the View mode, left-click on the left side of the feedback paragraph and edit the paragraph.

- Find the line `nextTask:` and write in `"[End of test](endoftest)"`.

Test data: See Appendix 2.

Expected result: The task is ready and looks like so:

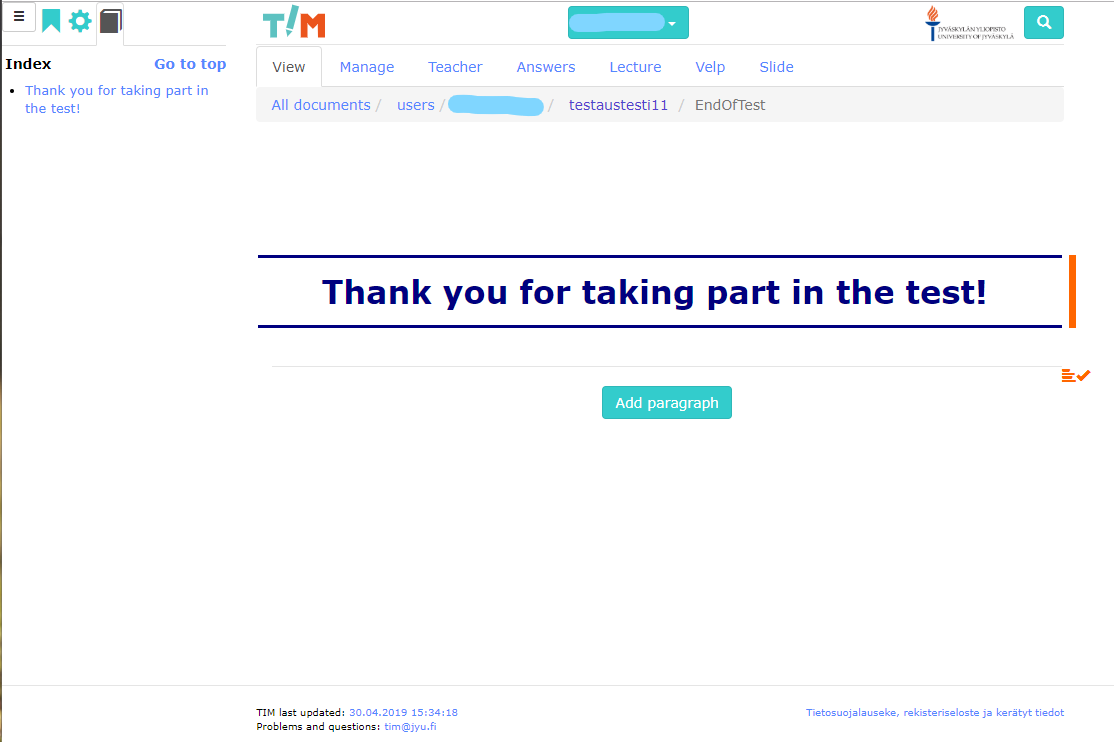

5.4 Building a Test, End Page of Test

Test case: 1.4

Initial State: The test cases 1.1-1.3 have been completed successfully.

Steps:

- Go to the folder `TestausTesti1`.

- Create a new document called `EndOfTest`.

- Add a paragraph with the test data.

- Go to View mode.

Test Data:

# Thank you for taking part in the test!

Expected Result:

In View mode the page should look like below:

The test folder should look like below:

6. Test Cases for Student Answering the Test

The test cases 2.1-2.4 test successfully answering, as a student, the test created in the test cases 1.1-1.4.

6.1 Beginning a Test as Student

Test case: 2.1

Initial State: The test has been successfully built in the test cases 1.1-1.4.

Steps:

- Sign in to TIM as the test user with the username `test1@example.com` and the password `test1`.

- Go to the page of the test: https://timdevs01-2.it.jyu.fi/view/users/*username*/testaustesti1/testinstructions.

Test Data:

Expected Result: The instruction page for the test is visible.

6.2 Answering the Task until Stopping Condition for Correct Streak is Reached

Test case: 2.2

Initial State: The test case 2.1 has been successfully completed.

Steps:

| Step | Expected result: |
|---|---|
| 1. Click Begin test. | The first task page with instructions should appear. |
| 2. Answer the practice item and click OK. | The first item should appear. |
| 3. Answer the first item with `do ...` and click OK. | The level 1 feedback should appear. |
| 4. Click OK on feedback. | The next item should appear. |
| 5. Answer the item with `are ...` and click OK. | The level 2 feedback should appear. |
| 6. Click OK on feedback. | The next item should appear. |
| 7. Answer the item with `is ...` and click OK. | The correct answer feedback should appear. |
| 8. Click OK on feedback. | The next item should appear. |
| 9. Answer the item with `is ...` and click OK. | The correct answer feedback should appear. |
| 10. Click OK on feedback. | The end of task should appear with a hyperlink. |

Test Data: See individual steps for data.

Expected Result: See the image below and the individual steps for expected results.

6.3 Answering the Task Until Stopping Condition for Final Feedback Level is Reached

Test case: 2.3

Initial State: The test cases 2.1-2.2 have been successfully completed.

Steps:

| Step | Expected result: |

|---|---|

| 1. Click the link to next task. | The task 2 should appear. |

| 2. Answer the practice item and click OK. | The first item should appear. |

| 3. Answer the first item wrong and click OK. | The level 1 feedback should appear. |

| 4. Click OK on feedback. | The next item should appear. |

| 5. Answer the item wrong and click OK. | The level 2 feedback should appear. |

| 6. Click OK on feedback. | The next item should appear. |

| 7. Answer the item wrong and click OK. | The level 3 feedback should appear. |

| 8. Click OK on feedback. | The next item should appear. |

| 9. Answer the item wrong and click OK. | The level 4 feedback should appear. |

| 10. Click OK on feedback. | The next item should appear. |

| 11. Answer the item wrong and click OK. | The level 5 feedback should appear. |

| 12. Click OK on feedback. | The end of task should appear with a hyperlink. |

| 13. Click the link. | The end of test page should appear. |

Test Data: Wrong answers for the questions in task 2 include `if run I a mile`, `who at work I see`, `had whether I a computer`, and `when come I around`.

Expected Result: See image below and the individual steps for expected results.
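The two stopping conditions exercised in test cases 2.2 and 2.3 can be summarized with a small model. This is only a sketch of the behaviour the steps above expect, not the Feedback plugin's implementation: the function name is hypothetical, and it assumes that a wrong answer resets the correct streak and that the feedback level keeps increasing across items. The practice item is left out.

```python
from typing import List


def run_task(answers: List[bool], correct_streak: int = 2, max_level: int = 5) -> List[str]:
    """Return what the student sees for a sequence of answers (True = correct)."""
    level = 0    # feedback level shown for the latest wrong answer
    streak = 0   # consecutive correct answers so far
    shown = []
    for is_correct in answers:
        if is_correct:
            streak += 1
            shown.append("correct")
            if streak == correct_streak:
                shown.append("end of task")  # stopping condition: correct streak
                break
        else:
            streak = 0  # assumption: a wrong answer resets the streak
            level += 1
            shown.append(f"level {level}")
            if level == max_level:
                shown.append("end of task")  # stopping condition: final feedback level
                break
    return shown


case_2_2 = run_task([False, False, True, True])
case_2_3 = run_task([False] * 5)
print(case_2_2)
print(case_2_3)
```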

6.4 Answering the Test and Not Choosing Options

Test case: 2.4

Initial State: The test cases 2.1-2.3 have been successfully completed.

Steps:

| Step | Expected result: |
|---|---|
| 1. Sign in as another test user with the username `test2@example.com` and the password `test2`. | |
| 2. Go to the page of the test https://timdevs01-2.it.jyu.fi/view/users/*username*/testaustesti1/testinstructions. | |
| 3. Click Begin test. | |
| 4. Do not choose an answer for the practice item and click OK. | A note asking to choose an answer should appear. |
| 5. Choose an answer for the practice item and click OK. | The first item should appear. |
| 6. Do not choose any answer for the item and click OK. | A note asking to choose an answer should appear. |
| 7. Answer the item with `is ...` and click OK. | The correct answer feedback should appear. |
| 8. Click OK on feedback. | The next item should appear. |
| 9. Answer the item with `is ...` and click OK. | The correct answer feedback should appear. |
| 10. Click OK on feedback. | The end of task should appear. |
| 11. Click the link to the next task. | The next task should appear. |
| 12. Do not choose any answer for the practice item and click OK. | A note asking to choose an answer should appear. |
| 13. Answer the practice item and click OK. | The first item should appear. |
| 14. Do not choose any answer and click OK. | A note asking to choose an answer should appear. |
| 15. Answer the item right (see test data) and click OK. | The correct answer feedback should appear. |
| 16. Click OK on the feedback. | The next item should appear. |
| 17. Answer the item right (see test data) and click OK. | The correct answer feedback should appear. |
| 18. Click OK on feedback. | The end of task should appear with a hyperlink. |
| 19. Click the link. | The end of test should appear. |

Test Data: The right answers for the questions in task 2 are `if I run a mile`, `who I see at work`, `whether I had a computer`, and `when I come around`.

Expected Result: See the individual steps for expected results.

7. Test Cases for Report Exporting

The test cases 3.1-3.13 test the successful exporting of the test results with various options.

7.1 Template for Test Cases 3.1-3.7

The test cases 3.1-3.7 all follow this template but with individual test data and final expected results.

Test cases: 3.1-3.7

Initial State: The test cases 1.1-2.4 have been successfully completed.

Steps:

| Step | Expected result: |
|---|---|
| 1. Sign in as the user who created the test. | |
| 2. Go to the page of the test https://timdevs01-2.it.jyu.fi/answers/users/*username*/testaustesti1/test1task1. | |
| 3. Expand the dialog 2 users with answers if it is collapsed. | |
| 4. Open the dropdown menu located in the upper-right corner of the dialog 2 users with answers. | The Create Feedback Report option should be in the dropdown menu. |
| 5. Click the Create Feedback Report button. | The Export to csv window should appear. |
| 6. Select the corresponding options from Export Options (see Test Data in the test case). | The radio buttons are selected accordingly, with only one selected per option. |
| 7. Click the Get Answers button. | A new tab should appear with the test data in .txt format. The final expected result depends on each individual test case. |

7.2 Exporting Test Results with Default Options

Test case: 3.1

Initial State: See Template for test cases 3.1-3.7.

Steps: See Template for test cases 3.1-3.7.

Test Data:

Export Options:

Period: Whenever

Validity: Valid

Names: Username and fullname

Scope: Only this task

Answers From: All users

Delimiter: Semicolon

Decimal: Decimal point

Expected Result:

The report should only include answers from the test1task1 document. The names should include both the username and the full name, and the headers should include both as well. The fields should be separated with semicolons. The time should be displayed with a decimal point.
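When checking an exported report by eye is error-prone, the delimiter and decimal format can also be checked with a short script. The following is a minimal sketch using Python's standard `csv` module; the column names and the sample row are hypothetical, since this plan does not list the exact columns of the export.

```python
import csv
import io
from typing import List


def read_report(text: str, delimiter: str = ";") -> List[List[str]]:
    """Split exported report text into rows of fields."""
    return list(csv.reader(io.StringIO(text), delimiter=delimiter))


# Hypothetical report content; the real export has its own columns.
sample = (
    "username;full name;answer;time\n"
    "test1@example.com;Test user 1;is cooking;2.5\n"
)
rows = read_report(sample)
header, first_row = rows[0], rows[1]

# Every row should have as many fields as the header when the delimiter is right.
consistent = all(len(row) == len(header) for row in rows)
# A decimal point parses directly as a float.
time_seconds = float(first_row[3])
print(header, consistent, time_seconds)
```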

7.3 Exporting Results of the Whole Test and with Only Username

Test case: 3.2

Initial State: See Template for test cases 3.1-3.7.

Steps: See Template for test cases 3.1-3.7.

Test Data:

Export Options:

Period: Whenever

Validity: Valid

Names: Username only

Scope: The whole test

Answers From: All users

Delimiter: Semicolon

Decimal: Decimal point

Expected Result:

The report should include answers from the whole test folder. Neither the full name nor its header should be displayed.

7.4 Exporting Results of the Whole Test and with Anonymous Username

Test case: 3.3

Initial State: See Template for test cases 3.1-3.7.

Steps: See Template for test cases 3.1-3.7.

Test Data:

Export Options:

Period: Whenever

Validity: Valid

Names: Anonymous username

Scope: The whole test

Answers From: All users

Delimiter: Semicolon

Decimal: Decimal point

Expected Result:

The report should include answers from the whole test folder. Under the username header, users should be displayed anonymously, as in user1 and user2.
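One way to picture the anonymous usernames is as a stable mapping from real usernames to `user1`, `user2` and so on. The sketch below assumes the numbering follows the order in which users first appear in the report; the plan does not state how the export actually assigns the numbers, and the function name is hypothetical.

```python
from typing import Dict, List


def anonymize(usernames: List[str]) -> List[str]:
    """Replace each username with userN, numbering users by first appearance."""
    mapping: Dict[str, str] = {}
    for name in usernames:
        # setdefault only stores the new alias when the name is seen for the first time
        mapping.setdefault(name, f"user{len(mapping) + 1}")
    return [mapping[name] for name in usernames]


aliases = anonymize(["test1@example.com", "test2@example.com", "test1@example.com"])
print(aliases)
```

The point to verify in the report is that the same real user always maps to the same anonymous name across all rows.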

7.5 Exporting Test Results with Tab-Delimiters

Test case: 3.4

Initial State: See Template for test cases 3.1-3.7.

Steps: See Template for test cases 3.1-3.7.

Test Data:

Export Options:

Period: Whenever

Validity: Valid

Names: Anonymous username

Scope: The whole test

Answers From: All users

Delimiter: Tab

Decimal: Decimal point

Expected Result:

The report should include answers from the whole test folder. The delimiters should be tabs. Only the anonymous username is displayed.

7.6 Exporting Test Results with Vertical Bar-Delimiters

Test case: 3.5

Initial State: See Template for test cases 3.1-3.7.

Steps: See Template for test cases 3.1-3.7.

Test Data:

Export Options:

Period: Whenever

Validity: Valid

Names: Anonymous username

Scope: The whole test

Answers From: All users

Delimiter: Vertical bar

Decimal: Decimal point

Expected Result:

The report should include answers from the whole test folder. The delimiters should be vertical bars. Only the username is displayed in the headers.

7.7 Exporting Test Results with Comma-Delimiters

Test case: 3.6

Initial State: See Template for test cases 3.1-3.7.

Steps: See Template for test cases 3.1-3.7.

Test Data:

Export Options:

Period: Whenever

Validity: Valid

Names: Anonymous username

Scope: The whole test

Answers From: All users

Delimiter: Comma

Decimal: Decimal point

Expected Result:

The report should include answers from the whole test folder. The delimiters should be commas. Only the username is displayed.

7.8 Exporting Test Results with Decimal Comma

Test case: 3.7

Initial State: See Template for test cases 3.1-3.7.

Steps: See Template for test cases 3.1-3.7.

Test Data:

Export Options:

Period: Whenever

Validity: Valid

Names: Anonymous username

Scope: The whole test

Answers From: All users

Delimiter: Comma

Decimal: Decimal comma

Expected Result:

The report should include answers from the whole test folder. The time should be displayed with a decimal comma and enclosed in quotation marks. Only the username is displayed.
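A likely reason for the quotation marks is that a decimal comma collides with a comma delimiter, so the value has to be quoted to stay in one field. The sketch below shows this standard CSV behaviour with Python's `csv` module; it illustrates the expected format only and is not the export code of TIM, and the function name and sample values are hypothetical.

```python
import csv
import io


def format_row(username: str, seconds: float, delimiter: str = ",", decimal: str = ",") -> str:
    """Write one report row, formatting the time with the given decimal separator."""
    time_text = f"{seconds:.1f}".replace(".", decimal)
    buffer = io.StringIO()
    # The default quoting mode quotes a field only when it contains the delimiter.
    writer = csv.writer(buffer, delimiter=delimiter, lineterminator="\n")
    writer.writerow([username, time_text])
    return buffer.getvalue()


comma_delimited = format_row("user1", 2.5)
semicolon_delimited = format_row("user1", 2.5, delimiter=";")
print(comma_delimited, semicolon_delimited)
```

With a semicolon delimiter the same value needs no quotes, which is why the quoting only shows up in this test case.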

7.9 Template for Test Cases 3.8-3.12

The test cases 3.8-3.12 all follow this template but with individual test data and final expected results.

Test cases: 3.8-3.12

Initial State: The test cases 1.1-2.4 have been successfully completed.

Steps:

| Step | Expected result: |
|---|---|
| 1. Sign in as the user who created the test. | |
| 2. Go to the page of the test https://timdevs01-2.it.jyu.fi/answers/users/*username*/testaustesti1/test1task1. | |
| 3. Expand the dialog 2 users with answers if it is collapsed. | |
| 4. Test data varies by the test case. | |
| 5. Open the dropdown menu located in the upper-right corner of the dialog 2 users with answers. | The Create Feedback Report option should be in the dropdown menu. |
| 6. Click the Create Feedback Report button. | The Export to csv window should appear. |
| 7. Select the corresponding options from Export Options (see Test Data in the test cases). | The radio buttons are selected accordingly, with only one selected per option. |
| 8. Click the Get Answers button. | A new tab should appear with the test data in .txt format. The final expected result depends on each individual test case. |

7.10 Exporting Test Results with Only One Visible User

Test case: 3.8

Initial State: See Template for test cases 3.8-3.12.

Steps: See Template for test cases 3.8-3.12.

Test Data:

Step 4: Click the Full Name text field and type `user 1`.

Export Options:

Period: Whenever

Validity: Valid

Names: Anonymous username

Scope: The whole test

Answers From: Only visible users

Delimiter: Comma

Decimal: Decimal comma

Expected Result:

Step 4: The user list should only display Test user 1.

Final Result: The report should include answers from the whole test folder. The report should only include answers from Test user 1.

7.11 Exporting Test Results with Two Visible Users

Test case: 3.9

Initial State: See Template for test cases 3.8-3.12.

Steps: See Template for test cases 3.8-3.12.

Test Data:

Step 4: Click the Full Name text field and type `test`.

Export Options:

Period: Whenever

Validity: Valid

Names: Anonymous username

Scope: The whole test

Answers From: Only visible users

Delimiter: Comma

Decimal: Decimal comma

Expected Result:

Step 4: The user list should display both Test user 1 and Test user 2.

Final result:

The report should include answers from the whole test folder. The report should include answers from both Test user 1 and Test user 2.

7.12 Exporting Test Results with Only Visible Users when Visible User List is Empty

Test case: 3.10

Initial State: See Template for test cases 3.8-3.12.

Steps: See Template for test cases 3.8-3.12.

Test Data:

Step 4: Click the Full Name text field and type `k`.

Export Options:

Period: Whenever

Validity: Valid

Names: Anonymous username

Scope: The whole test

Answers From: Only visible users

Delimiter: Comma

Decimal: Decimal comma

Expected Result:

Step 4: The user list should be empty.

Final result: The report should only include the row of headers and no user data. The Full Name header should not be included.
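The filter inputs in test cases 3.8-3.10 were chosen so that they match one user, both users and no users. The sketch below models the Full Name filter as a case-insensitive substring match, which is an assumption about how the user list filters and not something this plan specifies; the function name is hypothetical.

```python
from typing import List


def visible_users(full_names: List[str], filter_text: str) -> List[str]:
    """Return the users whose full name contains the filter text, ignoring case."""
    needle = filter_text.casefold()
    return [name for name in full_names if needle in name.casefold()]


users = ["Test user 1", "Test user 2"]
one_user = visible_users(users, "user 1")   # test case 3.8
both_users = visible_users(users, "test")   # test case 3.9
no_users = visible_users(users, "k")        # test case 3.10
print(one_user, both_users, no_users)
```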

7.13 Exporting Test Results with Only Selected User

Test case: 3.11

Initial State: See Template for test cases 3.8-3.12.

Steps: See Template for test cases 3.8-3.12.

Test Data:

Step 4: Select Test user 2 from the user list.

Export Options:

Period: Whenever

Validity: Valid

Names: Anonymous username

Scope: The whole test

Answers From: Only selected user

Delimiter: Comma

Decimal: Decimal comma

Expected Result:

Step 4: The user list should have Test user 2 highlighted.

Final Result: The report should include answers from the whole test folder. The report should only include answers from Test user 2.

7.14 Exporting Test Results with Only Selected User when Visible User List is Empty

Test case: 3.12

Initial State: See Template for test cases 3.8-3.12.

Steps: See Template for test cases 3.8-3.12.

Test Data:

Step 4.1: Select Test user 2 from user list.

Step 4.2: Click the Full Name text field and type `foobar`.

Export Options:

Period: Whenever

Validity: Valid

Names: Anonymous username

Scope: The whole test

Answers From: Only selected user

Delimiter: Comma

Decimal: Decimal comma

Expected Result:

Step 4.1: The user list should have Test user 2 highlighted.

Step 4.2: The user list should be empty.

Final Result: The report should only include the row of headers and no user data. The Full Name header should not be included.

7.15 Canceling Exporting

Test case: 3.13

Initial State: Test cases 1.1-2.4 have been successfully completed.

Steps:

| Step | Expected result: |
|---|---|
| 1. Sign in as the user who created the test. | |
| 2. Go to the page of the test https://timdevs01-2.it.jyu.fi/answers/users/*username*/testaustesti1/test1task1. | |
| 3. Expand the dialog 2 users with answers if it is collapsed. | |
| 4. Open the dropdown menu located in the upper-right corner of the dialog 2 users with answers. | The Create Feedback Report option should be in the dropdown menu. |
| 5. Click the Create Feedback Report button. | The Export to csv window should appear. |
| 6. Click the Cancel button. | The dialog window should close with no further operations. |

Expected Result: See individual steps for expected results.

8. Building Task with Errors

The test cases 4.1-4.7 test the error handling of test creation.

8.1 Building Task with Varying Number of Feedback Levels

Test case: 4.1

Initial State: A folder called TestausTesti1 has been created.

Steps:

- Go to the folder `TestausTesti1` in your documents.

- In the folder, create a document called `Test1Task3`.

- Go to the Manage mode, copy the task markup from Appendix 1 into the Edit the full document box and click Save.

- Go to View mode and click to edit the feedback paragraph.

- Delete a feedback level from one match option, such as: `- "Level 2 feedback: You answered: *|answer|* Answer is wrong. Try thinking *a bit* harder. "`

- Click Save. The expected result is the appearance of a red warning: `Different number of feedback levels.`

- Go to View mode and click to edit the feedback paragraph.

- Paste the deleted feedback level back in the right place.

- Click Save. The expected result is that the error disappears.

Test Data: See individual steps for data.

Expected Result: In step 6 an error should appear and be recovered from in step 9.
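The kind of check that step 6 is expected to trigger can be sketched as follows. This is not the Feedback plugin's code: the function name is hypothetical, and the sketch assumes that only the choices for wrong answers are compared, because in Appendix 1 the correct choice has a single feedback level while the wrong choices have five.

```python
from typing import Dict, List, Optional


def check_feedback_levels(choices: List[Dict]) -> Optional[str]:
    """Return the warning text if the wrong-answer choices differ in level count."""
    level_counts = {
        len(choice["levels"]) for choice in choices if not choice.get("correct", False)
    }
    if len(level_counts) > 1:
        return "Different number of feedback levels."
    return None


five_levels = [f"Level {n} feedback" for n in range(1, 6)]
choices = [
    {"match": ["is cooking"], "correct": True, "levels": ["Correct!"]},
    {"match": ["do cooking"], "levels": list(five_levels)},
    {"match": ["are cooking"], "levels": list(five_levels)},
    {"match": [], "levels": list(five_levels)},
]
before = check_feedback_levels(choices)

# Step 5 of the test case: delete the level 2 feedback from one match option.
del choices[1]["levels"][1]
after = check_feedback_levels(choices)
print(before, after)
```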

8.2 Building Task with No Link to Next Task or End of Test

Test case: 4.2

Initial State: An errorless task Test1Task3 has been created (test case 4.1).

Steps:

- Click to edit the feedback paragraph of the document `Test1Task3`.

- Delete the value of `nextTask`.

- Click Save. The expected result is the appearance of a red warning: `The following fields have invalid values: nextTask: Field may not be null.`

- Click to edit the feedback paragraph.

- Re-enter "link" into the `nextTask` field.

- Click Save. The expected result is that the error disappears.

Test Data: See individual steps for data.

Expected Result: In step 3 an error should be displayed and it should be recovered from in step 6.

8.3 Building Task with No Instruction Block Defined

Test case: 4.3

Initial State: An errorless task Test1Task3 has been created (test case 4.1).

Steps:

- Click to edit the instructions paragraph of the document `Test1Task3`.

- Delete the instruction paragraph ID `.instruction` and click Save.

- Click to edit the feedback paragraph and click Save. The expected result is the appearance of a red warning: `Instruction not defined.`

- Click to edit the instructions paragraph.

- Re-enter the deleted `.instruction` ID and click Save.

- Click to edit the feedback paragraph and click Save. The expected result is that the error disappears.

Test Data: See individual steps for data.

Expected Result: In step 3 an error should be displayed and it should be recovered from in step 6.

8.4 Building Task with No Right Answer Defined

Test case: 4.4

Initial State: An errorless task Test1Task3 has been created (test case 4.1).

Steps:

- Click to edit the feedback paragraph of the document `Test1Task3`.

- Delete the lines:

  `- match: [is baking]`

  `correct: true`

  `levels: *ismatch`

- Click Save. The expected result is the appearance of a red warning: `No correct answer defined for question item.`

- Click to edit the feedback paragraph.

- Re-enter the deleted text.

- Click Save. The expected result is that the error disappears.

Test Data: See individual steps for data.

Expected Result: In step 3 an error should be displayed and it should be recovered from in step 6.

8.5 Building Task with Wrong Names for Plugins

Test case: 4.5

Initial State: An errorless task Test1Task3 has been created (test case 4.1).

Steps:

- Click to edit the feedback paragraph of the document `Test1Task3`.

- Change the plugin name `drop1` on line 7 to `nodrop1`.

- Click Save. The expected result is the appearance of a red warning: `No plugin with such a name (nodrop1) or missing setPluginWords-method.`

- Click to edit the feedback paragraph.

- Change the plugin name `nodrop1` on line 7 back to `drop1`.

- Click Save. The expected result is that the error disappears.

Test Data: See individual steps for data.

Expected Result: In step 3 an error should be displayed and it should be recovered from in step 6.

8.6 Building Task with No Default Feedback

Test case: 4.6

Initial State: An errorless task Test1Task3 has been created (test case 4.1).

Steps:

- Click to edit the feedback paragraph of the document `Test1Task3`.

- Delete the lines:

  `- match: []`

  `levels: *defaultmatch`

- Click Save. The expected result is the appearance of a red warning: `Default feedback option needed.`

- Click to edit the feedback paragraph.

- Re-enter the deleted text.

- Click Save. The expected result is that the error disappears.

Test Data: See individual steps for data.

Expected Result: In step 3 an error should be displayed and it should be recovered from in step 6.
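Test cases 4.4 and 4.6 both remove one required choice from a question item. The sketch below shows the two checks side by side, assuming a question item's choices are available as a list of dictionaries shaped like the markup in Appendix 1; the function name is hypothetical and the real plugin may validate in a different order or report only the first error.

```python
from typing import Dict, List


def check_required_choices(choices: List[Dict]) -> List[str]:
    """Return the warnings for a missing correct answer or a missing default choice."""
    warnings = []
    if not any(choice.get("correct", False) for choice in choices):
        warnings.append("No correct answer defined for question item.")
    # The default feedback is the choice with an empty match list.
    if not any(choice["match"] == [] for choice in choices):
        warnings.append("Default feedback option needed.")
    return warnings


complete = [
    {"match": ["is baking"], "correct": True, "levels": ["Correct!"]},
    {"match": ["do baking"], "levels": ["Level 1 feedback"]},
    {"match": ["are baking"], "levels": ["Level 1 feedback"]},
    {"match": [], "levels": ["Level 1 feedback"]},
]
no_correct = complete[1:]    # test case 4.4: the correct choice deleted
no_default = complete[:-1]   # test case 4.6: the default choice deleted
print(check_required_choices(no_correct), check_required_choices(no_default))
```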

8.7 Building Task with No Feedback

Test case: 4.7

Initial State: An errorless task Test1Task3 has been created (test case 4.1).

Steps:

- Click to edit the feedback paragraph of the document `Test1Task3`.

- Delete the lines:

  `choices:`

  `- match: [is baking]`

  `correct: true`

  `levels: *ismatch`

  `- match: [do baking]`

  `levels: *domatch`

  `- match: [are baking]`

  `levels: *arematch`

  `- match: []`

  `levels: *defaultmatch`

- Click Save. The expected result is a YAML error.

- Click to edit the feedback paragraph.

- Re-enter the deleted text.

- Click Save. The expected result is that the YAML error disappears.

Test Data: See individual steps for data.

Expected Result: In step 3 an error should be displayed and it should be recovered from in step 6.

Appendices

Appendix 1: Template for Dropdown Task Markup

``` {settings=""}
hide_links: view
hide_top_buttons: view
css: |!!
.paragraphs {
    display: none;
}
!!
```

#- {area="dropdowntask1" .task}

## Instructions {.instruction defaultplugin="dropdown"}

Welcome to the test. Read the question carefully.

If you get the answer wrong, please read the feedback carefully.

Please try out a practice question:

I {#practice words: [will think, won't think, might think]} before answering.

## Item: {defaultplugin="dropdown"}

What {#dropdown1} on the stove?

## Item: {defaultplugin="dropdown"}

Who {#dropdown2} the cake?

## Item: {defaultplugin="dropdown"}

What {#dropdown3} on the roof?

## Item: {defaultplugin="dropdown"}

Who {#dropdown4} the 3 mile swim in the race?

``` {#fb1 plugin="feedback"}
correctStreak: 2
nextTask: nonexttaskdefined
questionItems:
- pluginNames: [dropdown1]
  words: [[is cooking, do cooking, are cooking]]
  choices:
    - match: [is cooking]
      correct: true
      levels: &ismatch
        - "**Correct!** You answered: |answer|"
    - match: [do cooking]
      levels: &domatch
        - "Level 1 feedback: You answered: *|answer|* Answer is wrong. Try **thinking**. "
        - "Level 2 feedback: You answered: *|answer|* Answer is wrong. Try thinking *a bit* harder. "
        - "Level 3 feedback: You answered: *|answer|* Answer is wrong. Try thinking **much more** harder. "
        - "Level 4 feedback: You answered: *|answer|* Answer is wrong. Just think about what the answer **is**. "
        - "Level 5 feedback: Please note the correct answer: 'What **is** / Who **is**'"
    - match: [are cooking]
      levels: &arematch
        - "Level 1 feedback: You answered: *|answer|* Answer is wrong. Try **thinking**. "
        - "Level 2 feedback: You answered: *|answer|* Answer is wrong. Try thinking *a bit* harder. "
        - "Level 3 feedback: You answered: *|answer|* Answer is wrong. Try thinking **much more** harder. "
        - "Level 4 feedback: You answered: *|answer|* Answer is wrong. Just think about what the answer **is**. "
        - "Level 5 feedback: Please note the correct answer: 'What **is** / Who **is**'"
    - match: []
      levels: &defaultmatch
        - "Level 1 feedback: default feedback for drop4"
        - "Level 2 feedback: default feedback for drop4"
        - "Level 3 feedback: default feedback for drop4"
        - "Level 4 feedback: default feedback for drop4"
        - "Level 5 feedback: Please note the correct answer: 'What **is** / Who **is**'"
- pluginNames: [dropdown2]
  words: [[is baking, do baking, are baking]]
  choices:
    - match: [is baking]
      correct: true
      levels: *ismatch
    - match: [do baking]
      levels: *domatch
    - match: [are baking]
      levels: *arematch
    - match: []
      levels: *defaultmatch
- pluginNames: [dropdown3]
  words: [[is jumping, do jumping, are jumping]]
  choices:
    - match: [is jumping]
      correct: true
      levels: *ismatch
    - match: [do jumping]
      levels: *domatch
    - match: [are jumping]
      levels: *arematch
    - match: []
      levels: *defaultmatch
- pluginNames: [dropdown4]
  words: [[is swimming, do swimming, are swimming]]
  choices:
    - match: [is swimming]
      correct: true
      levels: *ismatch
    - match: [do swimming]
      levels: *domatch
    - match: [are swimming]
      levels: *arematch
    - match: []
      levels: *defaultmatch
```

#- {area_end="dropdowntask1"}

Appendix 2: Template for Drag & Drop Task Markup

``` {settings=""}
hide_links: view
hide_top_buttons: view
css: |!!
.paragraphs {
    display: none;
}
!!
```

#- {area="dragtask1" .task}

## Instructions {.instruction defaultplugin="drag"}

Welcome to the test. Read the question carefully.

If you get the answer wrong, please read the feedback carefully.

Please try out a practice question:

Order the words correctly into the sentence below.

Drag from here: {#practice1 words: [I, before, will think, answering]}

To here: {#practice2}.

## Item {defaultplugin="drag"}

::: {.info}

Please order the words correctly into the sentence below.

{#drag1 words: [I, when, around, come]}

:::

You know where I'll be found {#drop1}.

## Item {defaultplugin="drag"}

::: {.info}

Please order the words correctly into the sentence below.

{#drag2 words: [I, if, a mile, run]}

:::

I will be quite tired {#drop2}.

## Item {defaultplugin="drag"}

::: {.info}

Please order the words correctly into the sentence below.

{#drag3 words: [I, who, at work, see]}

:::

I will tell you {#drop3}.

## Item {defaultplugin="drag"}

::: {.info}

Please order the words correctly into the sentence below.

{#drag4 words: [I, whether, a computer, had]}

:::

He wanted to know {#drop4}.

``` {#fb1 plugin="feedback"}
correctStreak: 2
nextTask: nonexttaskdefined
questionItems:
- pluginNames: [drop1]
  dragSource: drag1
  words: []
  choices:
    - match: [when I come around]
      correct: true
      levels: &rightmatch
        - "**Correct!** You answered: |answer|"
    - match: [when around I come]
      levels: &asvmatch
        - "Level 1 feedback: You answered: *|answer|* Answer is wrong.
          - Try **thinking**. "
        - "Level 2 feedback: You answered: *|answer|* Answer is wrong.
          - Try thinking *a bit* harder. "
        - "Level 3 feedback: You answered: *|answer|* Answer is wrong.
          - Try thinking **much** harder. "
        - "Level 4 feedback: You answered: *|answer|* Answer is wrong.
          - Just think about **what the answer is**. "
        - "Level 5 feedback: Please note the correct word order:
          - 'conjunction subject verb the rest'"
    - match: [when come I around]
      levels: &vsamatch
        - "Level 1 feedback: You answered: *|answer|* Answer is wrong.
          - Try **thinking**. "
        - "Level 2 feedback: You answered: *|answer|* Answer is wrong.
          - Try thinking *a bit* harder. "
        - "Level 3 feedback: You answered: *|answer|* Answer is wrong.
          - Try thinking **much** harder. "
        - "Level 4 feedback: You answered: *|answer|* Answer is wrong.
          - Just think about **what the answer is**. "
        - "Level 5 feedback: Please note the correct word order:
          - 'conjunction subject verb the rest'"
    - match: []
      levels: &defaultmatch
        - "Level 1 feedback: You answered: *|answer|* Answer is wrong.
          - Try **thinking**."
        - "Level 2 feedback: You answered: *|answer|* Answer is wrong.
          - Think about what comes first."
        - "Level 3 feedback: You answered: *|answer|* Answer is wrong.
          - Think about what word comes first."
        - "Level 4 feedback: You answered: *|answer|* Answer is wrong.
          - The conjunction should come first."
        - "Level 5 feedback: Please note the correct word order:
          - 'conjunction subject verb the rest'"
- pluginNames: [drop2]
  dragSource: drag2
  words: []
  choices:
    - match: [if I run a mile]
      correct: true
      levels: *rightmatch
    - match: [if a mile I run]
      levels: *asvmatch
    - match: [if run I a mile]
      levels: *vsamatch
    - match: []
      levels: *defaultmatch
- pluginNames: [drop3]
  dragSource: drag3
  words: []
  choices:
    - match: [who I see at work]
      correct: true
      levels: *rightmatch
    - match: [who at work I see]
      levels: *asvmatch
    - match: [who see I at work]
      levels: *vsamatch
    - match: []
      levels: *defaultmatch
- pluginNames: [drop4]
  dragSource: drag4
  words: []
  choices:
    - match: [whether I had a computer]
      correct: true
      levels: *rightmatch
    - match: [whether a computer I had]
      levels: *asvmatch
    - match: [whether had I a computer]
      levels: *vsamatch
    - match: []
      levels: *defaultmatch
```

#- {area_end="dragtask1"}
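Both templates define each list of feedback levels once, in the first question item, and reuse it in the later items through YAML anchors and aliases. The reduced fragment below is a sketch of that mechanism only; the names `exampleA`, `exampleB` and `okfeedback` are hypothetical and do not appear in the templates.

```yaml
questionItems:
- pluginNames: [exampleA]          # hypothetical plugin name
  choices:
    - match: [right]
      correct: true
      levels: &okfeedback          # anchor: defines the list once
        - "**Correct!** You answered: |answer|"
- pluginNames: [exampleB]          # hypothetical plugin name
  choices:
    - match: [right]
      correct: true
      levels: *okfeedback          # alias: reuses the list defined above
```

In YAML, an alias must refer to an anchor that occurs earlier in the same document. Removing an anchored `levels` list from the first question item would therefore also invalidate every later item that refers to it, which is worth keeping in mind when editing the templates for the error test cases.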